The 'transpose' of a matrix is often referenced, but what does it mean? It surely has an algebraic interpretation, but I do not know if that could be expressed in just a few words. Anyway, I would rather do a couple of examples to find out what the pattern is.

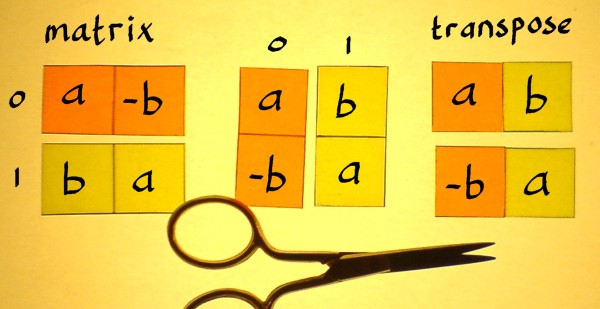

Below is a 2x2 matrix as it is used in complex multiplication. The transpose of a square matrix can be considered a mirrored version of it: mirrored over the main diagonal. That is the diagonal with the a's on it.

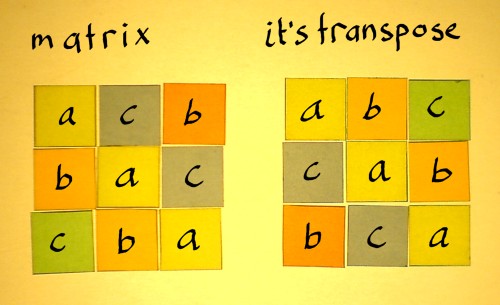

For a square matrix of any size, the same principle would hold. Just imagine that the main diagonal is a line over which the entries are flipped.

Although the 'flip-over-the-diagonal' representation helps to introduce the topic, it does not satisfy me. A matrix can be considered a set of vectors, organised as rows or columns. Then, transposition can be expressed:

the rows of a matrix become the columns in its transpose

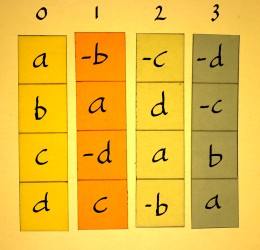

The same applies to bigger matrices. Note that the middle figure is already the transpose, but it is still shown as columns. The rightmost figure accentuates the rows of the transpose. And that is how it will be used in practical applications.
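The rows-become-columns rule can be tried out in a few lines of Python. This is only a minimal sketch: the helper name `transpose` and the example values are my own assumptions, and matrices are stored as plain lists of rows. It also works for a matrix that is not square.

```python
def transpose(matrix):
    """Return the transpose: row i of the input becomes column i of the output."""
    # zip(*matrix) collects the first entry of every row, then the second, etc.
    return [list(column) for column in zip(*matrix)]


square = [[1, 2],
          [3, 4]]
wide = [[1, 2, 3],
        [4, 5, 6]]  # 2 rows, 3 columns

print(transpose(square))  # [[1, 3], [2, 4]]
print(transpose(wide))    # [[1, 4], [2, 5], [3, 6]]
```

Transposing twice gives back the original matrix, which is a quick sanity check for any transpose routine.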

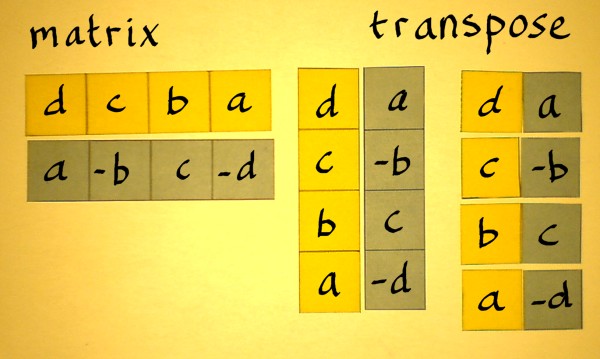

The vector-cut-and-paste-representation shows that non-square matrices have a transpose as well. Below is a block-matrix example that may show up a few more times on my pages.

Still, the question is: what is the point of a transpose, in the algebraic sense? I can only illustrate the significance of a transpose by means of the simplest examples. Here again is a 2x2 matrix as it could be part of complex multiplication. Note that such matrices already have a symmetry that arbitrary matrices do not necessarily have.

Multiplication with a 'unit pulse' is done to find the responses of the matrix and its transpose.

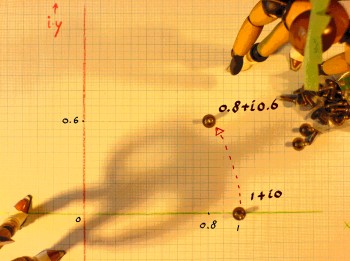

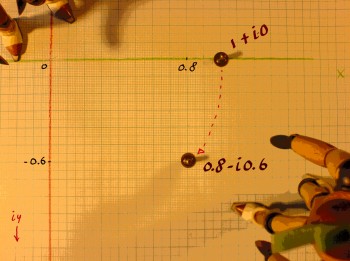

These operations can be visualised on the complex plane:

The first matrix rotates in anti-clockwise direction, and its transpose rotates in clockwise direction. Such couples, which are mirrored over the x-axis, are called 'complex conjugates'. For matrices bigger than 2x2, such visualisations cannot be done. But the effect of matrix transposition in general can loosely be considered a reversal of the rotations in it.
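The unit-pulse responses can be reproduced numerically. In this sketch I assume that the complex number a + ib is laid out as the matrix [[a, -b], [b, a]], with a = 0.8 and b = 0.6; the helper names `mat_vec` and `transpose` are mine.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) with a column vector (list)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def transpose(matrix):
    """Rows of the input become columns of the output."""
    return [list(column) for column in zip(*matrix)]


# Assumed layout for the complex number a + ib: [[a, -b], [b, a]]
a, b = 0.8, 0.6
m = [[a, -b],
     [b, a]]

unit_pulse = [1, 0]  # the point 1 + 0i on the complex plane

print(mat_vec(m, unit_pulse))             # [0.8, 0.6]  -> above the x-axis
print(mat_vec(transpose(m), unit_pulse))  # [0.8, -0.6] -> below the x-axis
```

The two responses differ only in the sign of the second (imaginary) component, which is the mirroring over the x-axis.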

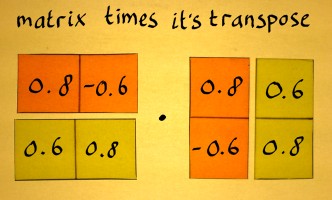

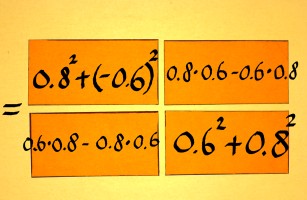

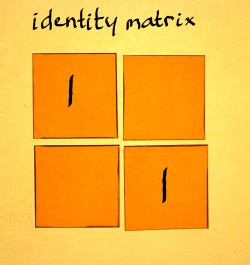

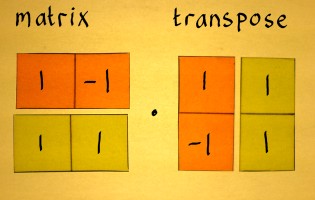

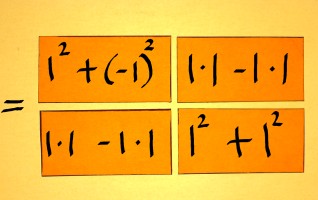

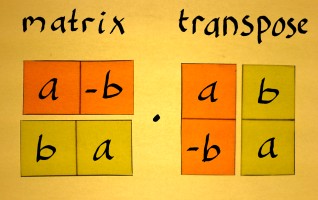

Let us now check what will happen if this matrix and its transpose are multiplied with each other.

$0.8^2 + 0.6^2 = 0.64 + 0.36 = 1$, and $(0.6 \cdot 0.8) - (0.8 \cdot 0.6)$ is zero. Therefore we have a quite special result for this case: the identity.
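This product can be checked with a small script. It is a sketch under the assumption that the matrix has the layout [[a, -b], [b, a]] with a = 0.8 and b = 0.6. I use Python's `fractions.Fraction` so that the arithmetic is exact and no floating-point rounding blurs the result; the helper names are mine.

```python
from fractions import Fraction


def transpose(matrix):
    """Rows of the input become columns of the output."""
    return [list(column) for column in zip(*matrix)]


def mat_mul(left, right):
    """Multiply two matrices given as lists of rows."""
    right_columns = transpose(right)
    return [[sum(x * y for x, y in zip(row, column)) for column in right_columns]
            for row in left]


# Exact fractions: 0.8 = 4/5 and 0.6 = 3/5.
a, b = Fraction(4, 5), Fraction(3, 5)
m = [[a, -b],
     [b, a]]  # assumed layout for a + ib

product = mat_mul(m, transpose(m))
print(product == [[1, 0], [0, 1]])  # True
```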

I have deliberately chosen a matrix whose transpose equals the inverse. Note that this is not generally the case with the transpose of just an arbitrary matrix. It is only the case with so-called 'orthonormal' matrices (more commonly called orthogonal matrices). Like with real numbers, when you multiply a matrix with its inverse the result is an identity. Compare with multiplicative inverses like:

1*(1/1)=1 or 4*(1/4)=1.

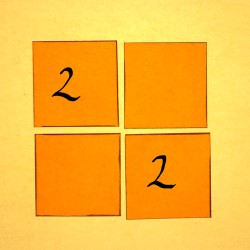

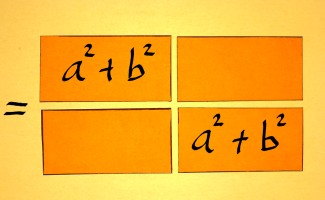

Below is a matrix whose transpose is not the inverse. When these are multiplied, the result is not an identity matrix.

Still, the output shows a nice regularity. It is an identity matrix scaled by a constant: the same constant appears at every position on the main diagonal, and the other entries are zero.
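Here is a small sketch of that regularity. The values a = 2 and b = 1 are hypothetical ones that I picked for illustration, and the layout [[a, -b], [b, a]] is assumed as before. The constant on the diagonal comes out as a*a + b*b.

```python
def transpose(matrix):
    """Rows of the input become columns of the output."""
    return [list(column) for column in zip(*matrix)]


def mat_mul(left, right):
    """Multiply two matrices given as lists of rows."""
    right_columns = transpose(right)
    return [[sum(x * y for x, y in zip(row, column)) for column in right_columns]
            for row in left]


a, b = 2, 1  # hypothetical example values, not taken from the figures
m = [[a, -b],
     [b, a]]  # assumed layout for a + ib

product = mat_mul(m, transpose(m))
print(product)        # [[5, 0], [0, 5]]
print(a * a + b * b)  # 5
```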

All 2x2 matrices of the type that appear in complex multiplication show this constant-diagonal result when multiplied with their transpose. For this type of matrix an inverse always exists, as long as the matrix is not all zeros. Therefore complex numbers and aggregates of these are favourites in DSP technique. They offer systematic control over data transforms, and the option to reverse a process quite accurately, if needed.

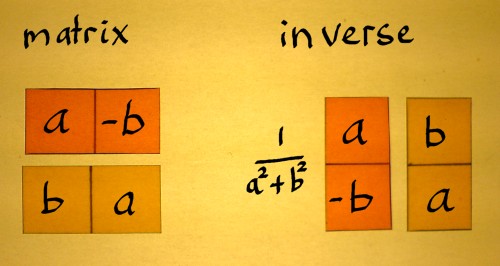

On this page I have illustrated how multiplication of a matrix with its inverse results in an identity matrix. But I did not indicate how the inverse of a matrix can be found. For the above-mentioned type of matrix that is easy. It actually means finding the inverse of the complex number represented in it. Here is how to proceed:

First find the transpose. The transpose of a complex number $(a+ib)$ is its conjugate $(a-ib)$. Subsequently you divide by $a^2+b^2$, which is the radius (or 'norm') squared. The whole thing could be written:

$$(a+ib)^{-1} = \frac{a-ib}{a^2+b^2}$$
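The conjugate-then-divide recipe can be sketched in Python as well. The function name `complex_inverse` and the values a = 2, b = 1 are hypothetical choices of mine; `Fraction` is used so that the check at the end is exact.

```python
from fractions import Fraction


def complex_inverse(a, b):
    """Return (real, imag) of 1 / (a + ib): the conjugate divided by a^2 + b^2."""
    norm_squared = a * a + b * b
    if norm_squared == 0:
        raise ZeroDivisionError("0 + 0i has no inverse")
    return a / norm_squared, -b / norm_squared


a, b = Fraction(2), Fraction(1)  # hypothetical example values
inv_a, inv_b = complex_inverse(a, b)
print(inv_a, inv_b)  # 2/5 -1/5

# Check: (a + ib) * (inv_a + i*inv_b) should be 1 + 0i.
real = a * inv_a - b * inv_b
imag = a * inv_b + b * inv_a
print(real, imag)  # 1 0
```

The zero case is rejected explicitly, because 0 + 0i is the one complex number without an inverse.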

And now the inverse of other and bigger matrices please? Ehhhhm.... stay tuned.